AI Deep Research Explained

How AI Research Assistants Actually Work

What separates a quick Google search from genuine research? When you search, you get a list of links. When you research, you follow a trail of questions, cross-reference sources, challenge assumptions, and synthesize insights from multiple angles. Real research is iterative – each answer leads to new questions, and each source reveals gaps that need to be filled.

Until recently, AI could only do the equivalent of memorizing an encyclopedia. Ask it something, and it would either know the answer from training or make something up. But a new generation of AI assistants has learned to research like humans do – following hunches, checking facts, building understanding piece by piece.

Instead of simple retrieval, these systems conduct genuine investigations. They question, explore, verify, and synthesize. When you ask a complex question, they break it down into sub-problems, chase down multiple leads, cross-check their findings, and weave everything together into a coherent answer. It's the difference between looking something up and actually figuring it out.

This represents a fundamental shift in AI capabilities – from static knowledge to dynamic discovery. Let's explore how these AI research companions work at an algorithmic level to understand the sophisticated machinery behind their investigative powers.

Query Understanding

The first step happens the moment you hit "enter" on your question. Modern AI assistants understand what you're asking for, treating your query as more than just keywords.

Think of a skilled librarian at an info desk. You ask a question, and the librarian first clarifies what you really need. Are you looking for a specific fact? A broad explanation? Current events? Similarly, AI assistants use advanced language understanding to parse your request's intent.

If you ask "What's the capital of that country that changed its name last week?", the system detects that this is a factual, up-to-date question, prime for web search. But if you ask "Write a poem about the moon," it recognizes that no external research is needed.

Systems like Perplexity route queries to appropriate processes based on intent. Grok decides whether a live web search is necessary – if you're asking about trending topics, it reaches out to the web and even searches recent posts on X/Twitter. For common knowledge, it might skip the web entirely.

This intent analysis sets the game plan: deciding if and how the assistant should dive into external research.
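As a rough illustration of this routing decision, here is a minimal Python sketch. The cue lists and the `needs_web_search` and `has_cue` functions are hypothetical. Real assistants typically judge intent with a trained classifier or the language model itself, not hand-written keyword rules.

```python
import re

# Hypothetical cue lists; real assistants usually use a trained classifier
# or the LLM itself to judge intent instead of hand-written keywords.
FRESHNESS_CUES = ("today", "yesterday", "last week", "latest", "current", "now", "breaking")
CREATIVE_CUES = ("write a poem", "write a story", "brainstorm")


def has_cue(text: str, cues: tuple) -> bool:
    """True if any cue appears as whole words (so 'now' does not match 'know')."""
    return any(re.search(r"\b" + re.escape(cue) + r"\b", text) for cue in cues)


def needs_web_search(query: str) -> bool:
    """Rough guess at whether a query needs fresh external information."""
    q = query.lower()
    if has_cue(q, CREATIVE_CUES):
        return False  # creative tasks can be handled by the model alone
    if has_cue(q, FRESHNESS_CUES):
        return True  # time-sensitive wording suggests a live search
    # A year such as 2023 also hints at time-bound facts.
    return re.search(r"\b(?:19|20)\d{2}\b", q) is not None
```

With these toy rules, the name-change question triggers a search ("last week" is a freshness cue), while the moon poem does not.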

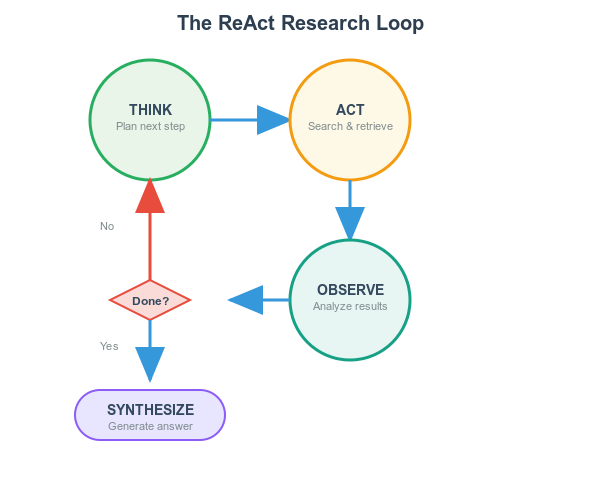

The Research Loop

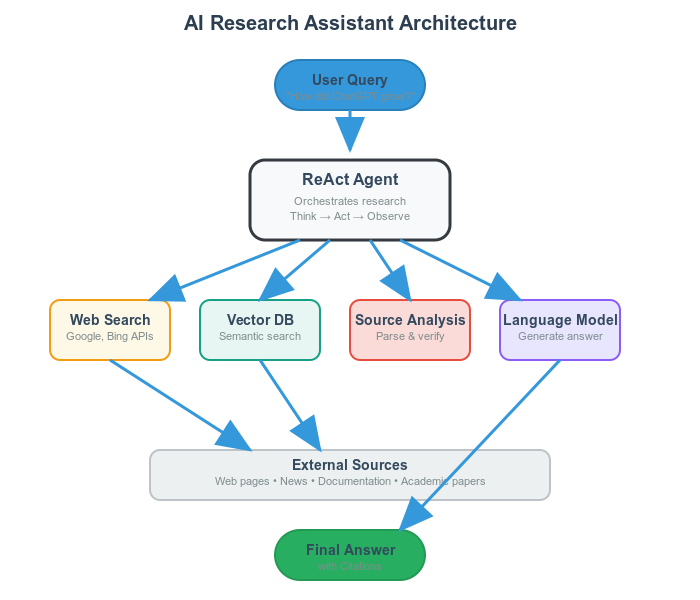

Once the AI decides external research is needed, it enters a deliberative loop, often modeled on the ReAct pattern (Reason + Act), much like how a human researcher approaches complex queries.

Imagine investigating a tough question. You might think: "What exactly am I looking for? Maybe I should first find data on X. Let's search for X... okay, got something. Now that suggests I should look up Y... now combine X and Y to get the answer."

AI research assistants do almost the same thing in a blazing-fast, iterative loop:

Thought (Reason) – The AI ponders what to do next. "The user is asking about ChatGPT's user growth in its first year. I should search for ChatGPT's launch details first."

Action – It performs an action like Search("ChatGPT launch date user statistics"). The assistant generates a query and hits the search engine.

Observation – Results come back. "ChatGPT launched in November 2022 and reached 100 million users in just two months..."

Next Thought – With new information, it updates reasoning. "I have the launch timing, but I need more specific data about the full first year. Let me search for detailed growth metrics."

Next Action – It performs another search: Search("ChatGPT user growth 2023 statistics milestone").

This continues until the AI has enough information to provide a complete answer. The ReAct approach turns the language model into an agent that can think aloud and use tools, helping it handle complex queries and reducing the hallucinations that occur when a model answers without checking facts.
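The Thought, Action, and Observation cycle described above can be sketched in a few lines of Python. Everything here is a stand-in: `search` looks up a tiny hypothetical dictionary (`FAKE_INDEX`) instead of calling a real search API, and `scripted_policy` plays the role of the language model by returning a fixed thought, action, and argument at each step.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-in for a search API: maps a query string to a result snippet.
FAKE_INDEX = {
    "ChatGPT launch date user statistics":
        "ChatGPT launched in November 2022 and reached 100 million users in just two months.",
}


def search(query: str) -> str:
    """Pretend search tool: returns a canned snippet or a 'no results' message."""
    return FAKE_INDEX.get(query, "No results.")


# Each step, the policy returns (thought, action, argument).
Step = Tuple[str, str, str]


def react_loop(question: str,
               policy: Callable[[str, List[str]], Step],
               max_steps: int = 5) -> Tuple[str, List[str]]:
    """Alternate Thought -> Action -> Observation until the policy chooses 'finish'."""
    observations: List[str] = []
    for _ in range(max_steps):
        thought, action, arg = policy(question, observations)  # Thought (Reason)
        if action == "finish":
            return arg, observations  # arg carries the final answer
        if action == "search":
            observations.append(search(arg))  # Action, then Observation
    return "Stopped: step limit reached.", observations


def scripted_policy(question: str, observations: List[str]) -> Step:
    """Stand-in for the LLM; a real agent would prompt a model with the history."""
    if not observations:
        return ("I should find ChatGPT's launch details first.",
                "search", "ChatGPT launch date user statistics")
    return ("I have enough information to answer.", "finish", observations[-1])


answer, trace = react_loop("How fast did ChatGPT grow in its first year?", scripted_policy)
print(answer)
```

The `max_steps` cap is a common safeguard: it stops an agent that keeps searching without converging on an answer.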

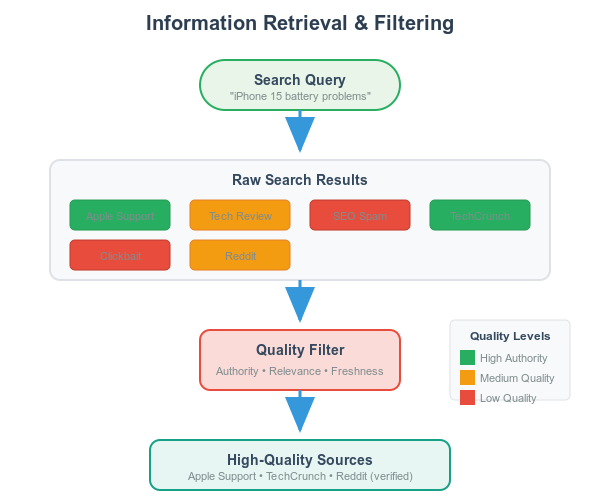

Information Retrieval

The "Act" part of the loop involves sophisticated retrieval mechanisms combining traditional search with modern AI.

Crafting Effective Searches

The assistant turns your request into good search queries, often rephrasing or adding context. If your question is vague, it might add specific keywords. This query crafting is guided by the agent's reasoning – it knows what it's looking for at each step.
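A toy version of query crafting might strip filler words from the agent's current sub-goal and append context terms the reasoning step has identified. The `STOPWORDS` set and `craft_query` helper are hypothetical; many systems instead ask the LLM to rewrite the query directly.

```python
# Hypothetical filler words to drop; many systems let the LLM rewrite queries instead.
STOPWORDS = {"what", "what's", "is", "the", "a", "an", "of", "how", "did", "does",
             "about", "tell", "me", "in", "its"}


def craft_query(sub_goal: str, context_terms: list[str]) -> str:
    """Turn a reasoning sub-goal plus context terms into a compact keyword query."""
    words: list[str] = []
    for token in (sub_goal + " " + " ".join(context_terms)).lower().split():
        token = token.strip("?,.!\"'")
        # Keep each content word once, preserving its original order.
        if token and token not in STOPWORDS and token not in words:
            words.append(token)
    return " ".join(words)
```

For example, `craft_query("How did ChatGPT grow in its first year?", ["user", "statistics", "2023"])` returns `"chatgpt grow first year user statistics 2023"`.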

External vs. Internal Sources

Many assistants call out to web search APIs (Bing, Google) for current results. Others, like Perplexity, also draw on their own index, which is built and kept fresh by a web crawler (PerplexityBot).

Behind the scenes, these indexes often use vector search technology. Content is pre-processed into numerical embeddings, allowing the system to quickly find semantically relevant documents. A query like "iPhone 15 battery problems" gets converted into an embedding that can pull up conceptually matching documents, even if they don't share exact keywords.
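At its core, vector search ranks documents by how similar their embeddings are to the query's embedding. The sketch below uses cosine similarity over made-up three-dimensional vectors (`DOCS` and the query vector are invented for illustration). A real system would obtain vectors with hundreds or thousands of dimensions from an embedding model and would typically use an approximate nearest-neighbor index rather than sorting every document.

```python
import math

# Made-up 3-dimensional "embeddings" for illustration only.
DOCS = {
    "Users say the iPhone 15 drains quickly overnight": [0.9, 0.1, 0.2],
    "Best hiking trails in Colorado": [0.0, 0.9, 0.1],
    "Apple phone charge capacity issues after update": [0.8, 0.2, 0.3],
}


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of vector lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms


def top_k(query_vec: list[float], docs: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the k document titles whose vectors point most nearly the same way as the query."""
    return sorted(docs, key=lambda title: cosine(query_vec, docs[title]), reverse=True)[:k]


# Pretend this vector came from embedding "iPhone 15 battery problems".
results = top_k([0.85, 0.1, 0.25], DOCS)
print(results)
```

Both battery-related documents come back on top, including "Apple phone charge capacity issues after update", which shares no keywords with the query.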

Ranking and Filtering Results

Web content varies wildly in quality. Advanced assistants use ranking algorithms to prioritize trustworthy, relevant sources. Perplexity explicitly "prioritizes authoritative, trustworthy sources and de-emphasizes heavily SEO-optimized or biased content," favoring academic journals and reputable news sites over random blogs.

This quality filtering helps ensure the AI's answer is built on solid information rather than questionable data.
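One simple way to picture this kind of ranking is to blend each result's relevance with a trust prior for its source type, then drop anything below a threshold. The `Result` fields, `TRUST` weights, source-type labels, and threshold below are hypothetical; this is not any particular product's formula, and real rankers combine many more signals.

```python
from dataclasses import dataclass


@dataclass
class Result:
    url: str
    relevance: float  # 0..1, e.g. from the retriever
    source_type: str  # hypothetical label such as "journal", "news", "blog"


# Hypothetical trust priors per source type; not any real product's values.
TRUST = {"journal": 1.0, "news": 0.8, "blog": 0.4}


def rank(results: list[Result], weight: float = 0.5, min_score: float = 0.5) -> list[Result]:
    """Blend relevance with a trust prior, drop weak results, and sort best first."""
    scored = []
    for r in results:
        trust = TRUST.get(r.source_type, 0.2)  # unknown source types get a low prior
        score = weight * r.relevance + (1 - weight) * trust
        if score >= min_score:
            scored.append((score, r))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [r for _, r in scored]


results = [
    Result("https://seo-blog.example/top-10", 0.9, "blog"),
    Result("https://news.example/report", 0.8, "news"),
    Result("https://journal.example/study", 0.7, "journal"),
    Result("https://forum.example/thread", 0.6, "forum"),
]
ranked = [r.url for r in rank(results)]
print(ranked)
```

Ranked by relevance alone, the SEO blog would come first; with the trust prior blended in, the journal article leads and the unknown forum thread is filtered out.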

Source Analysis

When an AI "opens" a webpage, it parses the text content and looks for the parts relevant to the question, a bit like running a super-fast Ctrl+F search across multiple documents at once.

The assistant uses the language model to summarize or extract key points from each source. If one document is a Wikipedia article, the AI zeros in on the specific section and condenses the relevant paragraph into bullet points.
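A crude stand-in for this extraction step is to split a page into paragraphs and keep the ones that share the most words with the question. The keyword-overlap scoring in the hypothetical `best_passages` helper is an assumption for illustration; production systems more likely use embeddings or the language model itself to pick and summarize passages.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased word set; a crude tokenizer for illustration."""
    return set(re.findall(r"\w+", text.lower()))


def best_passages(page_text: str, question: str, k: int = 1) -> list[str]:
    """Return the k paragraphs that share the most words with the question."""
    q_terms = words(question)
    paragraphs = [p.strip() for p in page_text.split("\n\n") if p.strip()]
    return sorted(paragraphs, key=lambda p: len(q_terms & words(p)), reverse=True)[:k]


page = ("Neptune is the eighth planet from the Sun.\n\n"
        "Neptune's largest moon is Triton.\n\n"
        "Neptune was discovered in 1846.")
print(best_passages(page, "What is the largest moon of Neptune?"))
```

The paragraph about Triton wins because it matches "largest", "moon", "is", and "Neptune"; the selected text would then be handed to the language model to condense.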

Good research AIs cross-verify information across sources instead of trusting any single one. If Source A and Source B both report that Neptune has 16 known moons, the assistant gains confidence that the figure is reliable. If there's a discrepancy (an older page might still say 14, the count before two new moons were announced in 2024), it might dig further or give a nuanced answer.

This cross-checking tends to make retrieval-augmented systems more factual than models that rely purely on memory.
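Cross-checking can be sketched as a vote over the values different sources report for the same fact. The `cross_check` function and its status strings are hypothetical, and the sketch assumes the claims have already been extracted into comparable values (in a real assistant, the LLM would usually do that extraction).

```python
from collections import Counter
from typing import Optional


def cross_check(claims: dict[str, str], min_agree: int = 2) -> tuple[Optional[str], str]:
    """claims maps a source name to the value it reports for the same fact."""
    counts = Counter(claims.values())
    if not counts:
        return None, "no data"
    value, n = counts.most_common(1)[0]
    if len(counts) > 1:
        return value, "conflict: dig further"  # sources disagree
    return value, "confident" if n >= min_agree else "single source"
```

If two sources report different moon counts for Neptune, the function flags a conflict instead of silently picking one, which is the point where an agent would search again or hedge its answer.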

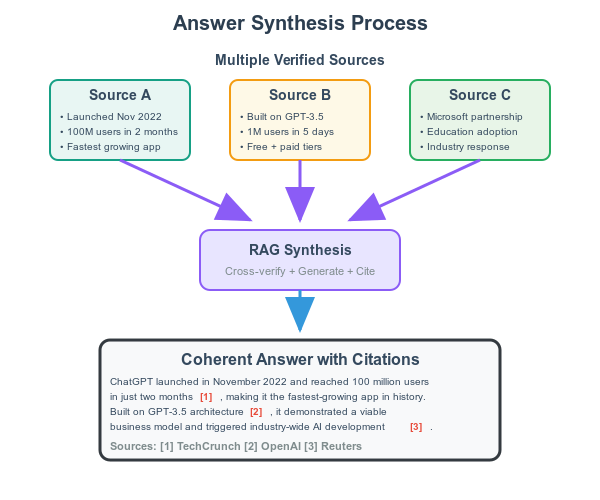

Answer Synthesis

Now comes the magic: synthesizing the gathered facts into a coherent answer. With the relevant information compiled, the AI's job is to weave it into a single, clear response.

Think of writing an article with all your reference books open in front of you. The system feeds curated information into the language model alongside the original question, essentially saying: "Here's the question, and here are relevant facts from sources A, B, C... Now use this to answer."

This is Retrieval-Augmented Generation (RAG): the model's knowledge is augmented with up-to-date external info. Because the answer is generated with source materials in mind, responses tend to be grounded in retrieved facts rather than potentially outdated memory.
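Assembling that "question plus sources" input is largely string formatting. The instruction wording in the hypothetical `build_rag_prompt` function is only an example; the resulting string would be sent to whichever language model the system uses.

```python
def build_rag_prompt(question: str, sources: list[tuple[str, str]]) -> str:
    """sources holds (url, excerpt) pairs; each is numbered so the model can cite it."""
    lines = [
        "Answer the question using only the numbered sources below.",
        "Cite each fact with its source number, like [1].",
        "",
    ]
    for i, (url, excerpt) in enumerate(sources, start=1):
        lines.append(f"[{i}] {url}")
        lines.append(excerpt)
    lines += ["", f"Question: {question}", "Answer:"]
    return "\n".join(lines)


prompt = build_rag_prompt(
    "When did ChatGPT launch?",
    [("https://example.com/chatgpt", "ChatGPT launched in November 2022.")],
)
print(prompt)
```

Numbering the sources in the prompt is what later makes numbered citations possible.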

Throughout this process, transparent systems attach citations to specific statements. Each important fact gets a numbered footnote linking back to its source, allowing verification and boosting trust.
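On the output side, numbered markers in the generated answer can be turned into footnotes. This sketch assumes, hypothetically, that the model was told to cite with markers like `[1]` matching the order of the sources it received; `render_citations` is an illustrative helper, not any product's API.

```python
import re


def render_citations(answer: str, urls: list[str]) -> str:
    """Append a footnote for each [n] marker in the answer; unknown numbers are skipped."""
    cited = sorted({int(n) for n in re.findall(r"\[(\d+)\]", answer)})
    notes = [f"[{n}] {urls[n - 1]}" for n in cited if 1 <= n <= len(urls)]
    return answer + ("\n\n" + "\n".join(notes) if notes else "")
```

Skipping out-of-range numbers is a small defensive choice: a model can occasionally emit a marker that matches no source, and a broken footnote would undermine the trust citations are meant to build.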

System Architecture

These research assistants consist of multiple components orchestrated together, like a chef coordinating specialized sous-chefs. The "head chef" is the agent logic (following ReAct), while "sous-chefs" are tools: search APIs, web page readers, the main LLM, and context managers.

When you ask something, the system might use a small model to decide "I should use the web for this," then the large LLM generates the search query, the search tool executes it, and a parsing module reads results. All these parts communicate in a loop.

Some systems use multiple models with different strengths. Perplexity, for example, can route queries to different backbone models (GPT-4o for complex reasoning vs. faster models for simple questions). Others add a verification step in which a second model double-checks whether the answer truly addresses the question.
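A router between a fast model and a stronger one can be as simple as a few heuristics. The model names, cue words, and thresholds in the hypothetical `pick_model` function are placeholders, not how Perplexity or any other product actually decides; real routers may themselves be learned models.

```python
# Placeholder model names; substitute whatever backbones a system actually offers.
FAST_MODEL = "fast-model"
STRONG_MODEL = "strong-reasoning-model"

# Hypothetical signals that a query needs deeper reasoning.
REASONING_CUES = ("compare", "why", "explain", "analyze", "pros and cons")


def pick_model(query: str, num_sources: int) -> str:
    """Send long, multi-source, or analytical queries to the stronger model."""
    q = query.lower()
    is_complex = (
        len(q.split()) > 20
        or num_sources > 5
        or any(cue in q for cue in REASONING_CUES)
    )
    return STRONG_MODEL if is_complex else FAST_MODEL
```

The trade-off is cost and latency versus answer quality: simple lookups go to the cheaper model, while anything that looks analytical or draws on many sources gets the stronger one.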

User Experience Benefits

All these algorithmic choices create several key benefits:

Up-to-Date Knowledge – The AI can provide information about recent events where older models would shrug with "I don't know that." Even news from an hour ago can be accessible, provided it has already been published and indexed.

Higher Accuracy & Less Hallucination – By actively looking up facts and cross-verifying them, answers become more grounded in reality. The system does an "open-book exam" instead of guessing from memory.

Transparency through Citations – Source citations let you verify information and boost confidence. It's like reading a well-researched article with footnotes.

Contextual Responses – The multi-step approach ensures the AI zeros in on your specific question, customizing answers by fetching exactly what's needed rather than regurgitating generic responses.

Lightning Speed – Despite running multiple searches, reading several articles, and writing an answer, the assistant typically responds quickly, thanks to optimized backends and parallel processing.
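Much of that speed comes from doing independent work concurrently. The sketch below uses Python's `asyncio.gather` to run three simulated page fetches at once. The `fetch` coroutine just sleeps to imitate network latency and is a hypothetical stand-in for a real HTTP client.

```python
import asyncio
import time


async def fetch(url: str, delay: float) -> str:
    """Stand-in for an HTTP request; asyncio.sleep simulates network latency."""
    await asyncio.sleep(delay)
    return f"contents of {url}"


async def fetch_all(urls: list[str], delay: float = 0.2) -> list[str]:
    """Start every fetch at once; gather returns results in input order."""
    return list(await asyncio.gather(*(fetch(u, delay) for u in urls)))


urls = ["https://a.example", "https://b.example", "https://c.example"]
start = time.perf_counter()
pages = asyncio.run(fetch_all(urls))
elapsed = time.perf_counter() - start
print(f"{len(pages)} pages in {elapsed:.2f}s")
```

Run one after another, the three 0.2-second fetches would take about 0.6 seconds; run concurrently, they finish in roughly the time of the slowest single fetch.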
